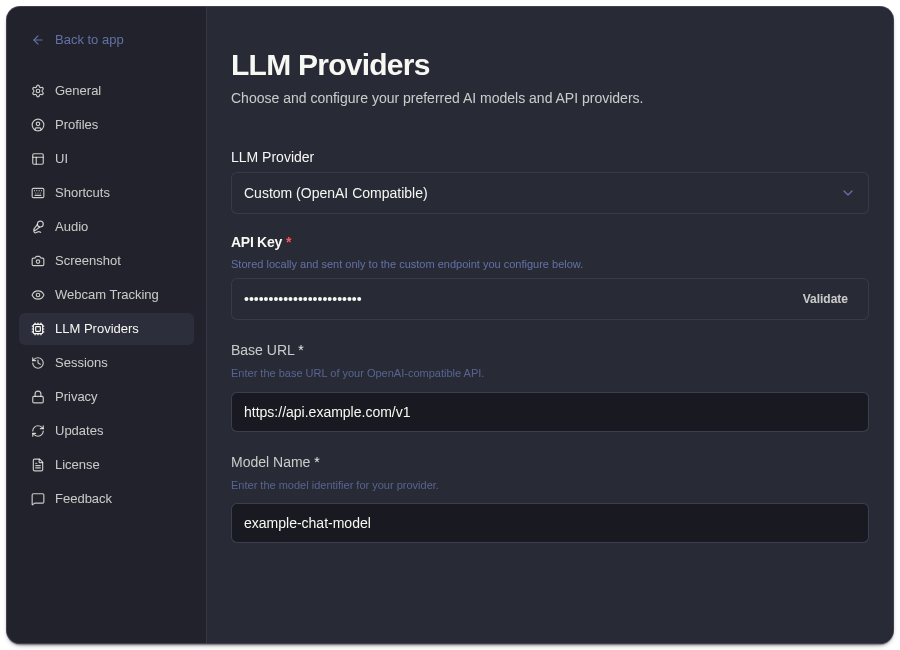

Use A Custom OpenAI-Compatible Endpoint

Use a custom OpenAI-compatible endpoint when your provider or proxy exposes an OpenAI-style API.

Required Fields

| Field | What To Enter |
|---|---|
| Base URL | The provider or proxy API base URL. |
| API key | The key required by that endpoint. |
| Model name | The exact model identifier the endpoint expects. |
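
As a rough illustration of the three fields above, the following Python sketch groups them and runs basic sanity checks before anything is sent. The class name `CustomEndpointConfig` and its `problems` method are hypothetical and are not part of ExtraBrain; the checks only catch obviously malformed input, not wrong keys or wrong model names.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class CustomEndpointConfig:
    """Hypothetical container for the three required fields."""

    base_url: str
    api_key: str
    model: str

    def problems(self) -> list[str]:
        """Return readable problems; an empty list means the fields look plausible."""
        found = []
        parsed = urlparse(self.base_url.strip())
        # A usable base URL needs an http(s) scheme and a host.
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            found.append("Base URL must be an absolute http(s) URL.")
        if not self.api_key.strip():
            found.append("API key is empty.")
        if not self.model.strip():
            found.append("Model name is empty.")
        return found
```

For example, `CustomEndpointConfig("llm.example.com", "", "m").problems()` reports the missing URL scheme and the empty key, while a complete configuration returns an empty list.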

Setup Steps

- Open onboarding or Settings -> LLM Providers.

- Choose the custom provider option.

- Enter the base URL.

- Enter the API key.

- Enter the model name.

- Validate the configuration.

- Run a short test analysis.

Common Mistakes

- Missing `/v1` when the provider expects it.

- Using a model display name instead of the API model identifier.

- Pasting a key for the wrong provider or proxy.

- Forgetting that provider retention, billing, and access rules are controlled by the custom endpoint operator.
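
The `/v1` mistake in the list above is easy to check by hand. As a minimal sketch, the hypothetical helper `base_url_variants` below returns the base URL as entered plus the alternative with or without a trailing `/v1`, so both can be tried when validation fails. It assumes the provider uses `/v1` as its only version prefix, which will not hold for every provider.

```python
def base_url_variants(base_url: str) -> list[str]:
    """Return the entered base URL and its counterpart with or without /v1."""
    # Trim whitespace and trailing slashes so the suffix check is reliable.
    cleaned = base_url.strip().rstrip("/")
    if cleaned.endswith("/v1"):
        return [cleaned, cleaned[: -len("/v1")]]
    return [cleaned, cleaned + "/v1"]
```

Calling `base_url_variants("https://proxy.example.com/")` yields `["https://proxy.example.com", "https://proxy.example.com/v1"]`. Check the provider's documentation to confirm which form it expects.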

Custom provider requests can include prompts, transcript context, screenshot-derived context, and custom questions.

Custom Endpoint Questions

What makes an endpoint OpenAI-compatible?

The endpoint should accept OpenAI-style API requests for chat or responses using the base URL, key, and model name you enter in ExtraBrain.
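
To make "OpenAI-style" concrete, here is a sketch of what a Chat Completions request typically looks like: a `POST` to `<base URL>/chat/completions` with a bearer token and a JSON body naming the model. Whether ExtraBrain uses this exact route, rather than the Responses route, is an assumption. The function name `build_chat_request` is hypothetical, and the function only builds the request without sending it.

```python
import json
from urllib.request import Request


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> Request:
    """Build (but do not send) an OpenAI-style Chat Completions request."""
    # Assumed route: the chat endpoint lives directly under the base URL.
    url = base_url.rstrip("/") + "/chat/completions"
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the result to `urllib.request.urlopen` would send it. An endpoint that accepts this request and answers with a JSON completion is compatible in the sense described here.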

What should I test after saving a custom endpoint?

Run a short analysis with non-sensitive transcript or screenshot context. Confirm the endpoint returns a response and that the model name matches what your provider or proxy expects.
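
If you test the endpoint outside the app as well, a check like the following sketch can confirm that a reply has the expected shape. It assumes the endpoint returns a Chat Completions style JSON body with a `choices` list. The helper name `extract_reply` is hypothetical, and providers that use a different response format will need a different check.

```python
def extract_reply(payload: dict) -> str:
    """Return the first assistant message from a Chat Completions style payload."""
    choices = payload.get("choices") or []
    if not choices:
        raise ValueError("Response has no 'choices'; the endpoint may not be OpenAI-compatible.")
    # The assistant text is expected under choices[0].message.content.
    content = (choices[0].get("message") or {}).get("content")
    if not isinstance(content, str) or not content.strip():
        raise ValueError("First choice has no text content.")
    return content
```

A payload such as `{"choices": [{"message": {"role": "assistant", "content": "pong"}}]}` returns `"pong"`, while an error body without `choices` raises `ValueError`.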